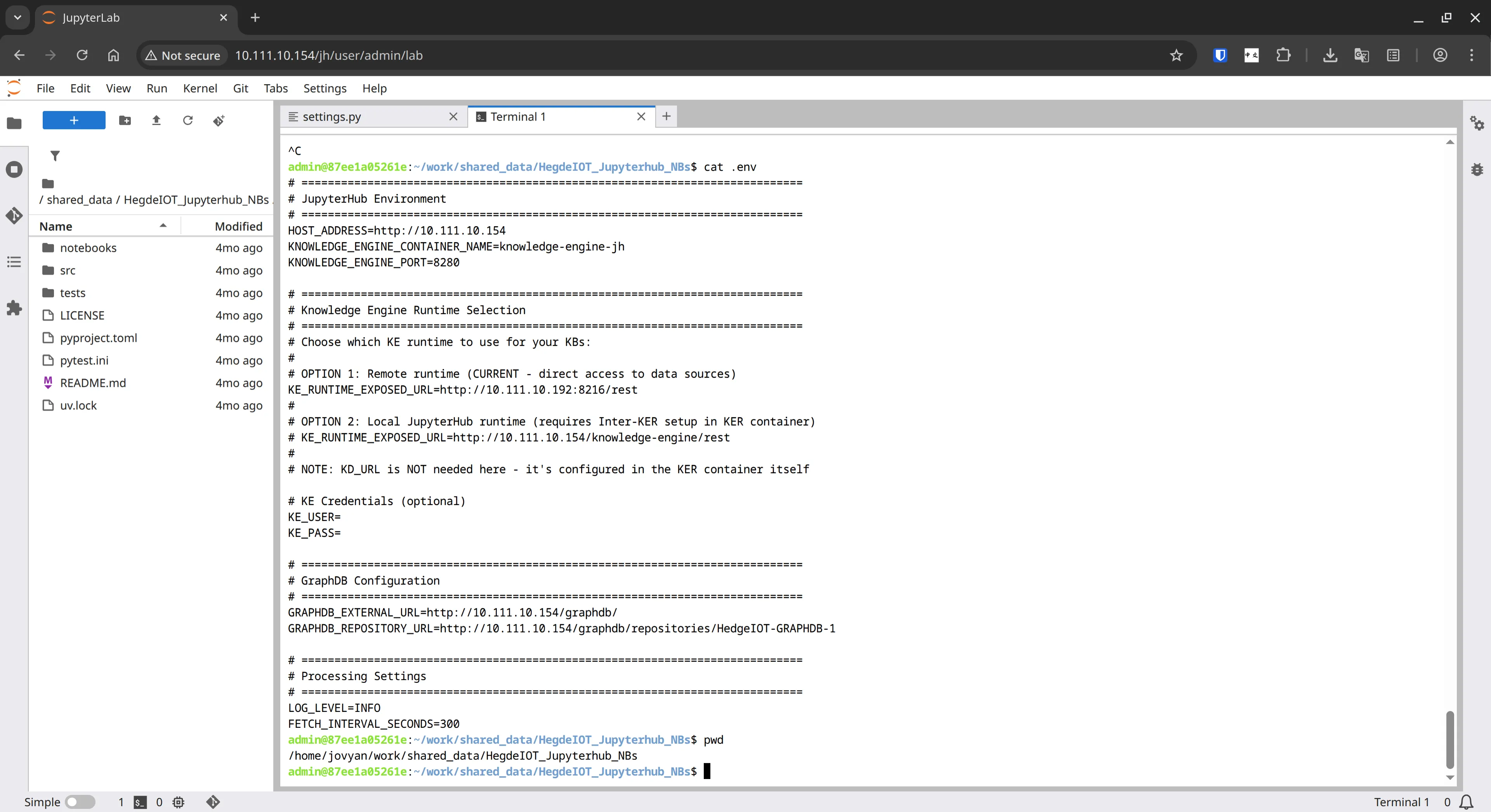

HedgeIOT: Notebook-Driven Knowledge Base Management with GraphDB

For HedgeIOT’s developer-facing interface, I built notebooks that do more than explain concepts — they actively operate the data pipeline.

The result was a notebook-driven Knowledge Base Management System that helps engineers understand and run the stack from one place.

Project Scope

Repo: https://github.com/VU-HedgeIOT/HegdeIOT_Jupyterhub_NBs

Main responsibilities:

- Fetch IoT observations from remote Knowledge Engine runtimes

- Validate and normalize data at the boundary with Pydantic checks

- Transform N3 data into RDF

- Store triples in GraphDB using SAREF-aligned modeling

- Query data with predefined and custom SPARQL queries

What I Built

1) A practical command-center notebook

I designed a central notebook for operational workflows: start/stop knowledge bases, monitor status, and execute data queries in one interface.

2) Data quality gates before persistence

Pydantic validation catches malformed or unexpected values before they reach the knowledge base, improving downstream query reliability.

3) Semantic pipeline from ingestion to querying

I implemented a clear flow from raw observations to graph storage and retrieval, so users can trace exactly how data becomes queryable knowledge.

Example of a generated SPARQL query to retrieve measurements:

PREFIX rdf: <http://www.w3.org/1999/02/22-rdf-syntax-ns#>

PREFIX saref: <https://w3id.org/saref#>

PREFIX xsd: <http://www.w3.org/2001/XMLSchema#>

SELECT *

WHERE {

?meas rdf:type saref:Measurement .

?meas saref:hasValue ?temp .

?meas saref:isMeasuredIn saref:TemperatureUnit .

?meas saref:hasTimestamp ?timestamp .

FILTER (xsd:dateTime(?timestamp) >= {start_timestamp}^^xsd:dateTime && xsd:dateTime(?timestamp) <= {end_timestamp}^^xsd:dateTime)

}Technical Challenges I Solved

- Unified teaching material and operational tooling in the same notebook environment

- Built a robust transformation path from N3 to RDF

- Preserved semantic consistency with SAREF-aligned structures

- Enabled both predefined analytics and ad hoc SPARQL exploration

Outcome

This work made the semantic layer tangible for developers: they could inspect, operate, and query the system directly without context switching across multiple tools.

For my portfolio, this project highlights applied data engineering and knowledge graph experience with strong developer experience design.